Understanding prompts, completions, and tokens

Literally any text can be used as a prompt—send some text in and get some text back. However, as entertaining as it can be to see what GPT-3 does with random strings, the real power comes from understanding how to write effective prompts.

Prompts

Prompts are how you get GPT-3 to do what you want. It's like programming, but with plain English. So, you have to know what you're trying to accomplish, but rather than writing code, you use words and plain text.

When you're writing prompts, the main thing to keep in mind is that GPT-3 is trying to figure out which text should come next, so including things such as instructions and examples provides context that helps the model figure out the best possible completion. Quality matters too: spelling, clarity, and the number of examples provided all affect the quality of the completion.

Another key consideration is the prompt size. While a prompt can be any text, the prompt and the resulting completion must add up to fewer than 2,048 tokens. We'll discuss tokens a bit later in this chapter, but that's roughly 1,500 words.

So, a prompt can be any text, and there aren't hard and fast rules that must be followed like there are when you're writing code. However, there are some guidelines for structuring your prompt text that can be helpful in getting the best results.

Different kinds of prompts

We'll dive deep into prompt writing throughout this book, but let's start with the different prompt types. These are outlined as follows:

- Zero-shot prompts

- One-shot prompts

- Few-shot prompts

Zero-shot prompts

A zero-shot prompt is the simplest type of prompt. It only provides a description of a task, or some text for GPT-3 to get started with. Again, it could be anything: a question, the start of a story, or a set of instructions. But the clearer your prompt text is, the easier it will be for GPT-3 to understand what should come next. Here is an example of a zero-shot prompt for generating an email message. The completion will pick up where the prompt ends, which in this case is after "Subject:".

Write an email to my friend Jay from me Steve thanking him for covering my shift this past Friday. Tell him to let me know if I can ever return the favor.
Subject:

The following screenshot is taken from a web-based testing tool called the Playground. We'll discuss the Playground more in Chapter 2, GPT-3 Applications and Use Cases, and Chapter 3, Working with the OpenAI Playground, but for now we'll just use it to show the completion generated by GPT-3 as a result of the preceding prompt. Note that the original prompt text is bold, and the completion shows as regular text:

Figure 1.1 – Zero-shot prompt example

So, a zero-shot prompt is just a few words or a short description of a task without any examples. Sometimes this is all GPT-3 needs to complete the task. Other times, you may need to include one or more examples. A prompt that provides a single example is referred to as a one-shot prompt.

One-shot prompts

A one-shot prompt provides one example that GPT-3 can use to learn how to best complete a task. Here is an example of a one-shot prompt that provides a task description (the first line) and a single example (the second line):

A list of actors in the movie Star Wars
1. Mark Hamill: Luke Skywalker

From just the description and the one example, GPT-3 learns what the task is and that it should be completed. In this example, the task is to create a list of actors from the movie Star Wars. The following screenshot shows the completion generated from this prompt:

Figure 1.2 – One-shot prompt example
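If you want to assemble a prompt like this in code rather than typing it by hand, the following is a minimal sketch in Python. The function name build_list_prompt is hypothetical and is not part of any OpenAI library. It simply joins a task description and one or more numbered examples into the same two-line text shown in the one-shot prompt above.

```python
def build_list_prompt(description, examples):
    """Return a prompt: a task description followed by numbered example items."""
    lines = [description]
    # Number the examples starting at 1, matching the "1. ..." style of the prompt.
    for number, item in enumerate(examples, start=1):
        lines.append(f"{number}. {item}")
    return "\n".join(lines)


# One example makes this a one-shot prompt; pass more items for a few-shot prompt.
prompt = build_list_prompt(
    "A list of actors in the movie Star Wars",
    ["Mark Hamill: Luke Skywalker"],
)
print(prompt)
```

Passing an empty list of examples leaves only the description, which is effectively a zero-shot prompt.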

The one-shot prompt works great for lists and commonly understood patterns. But sometimes you'll need more than one example. When that's the case you'll use a few-shot prompt.

Few-shot prompts

A few-shot prompt provides multiple examples, typically 10 to 100. Multiple examples are useful for showing a pattern that GPT-3 should continue. Adding more examples will likely improve the quality of the completion because the prompt gives GPT-3 more to learn from.

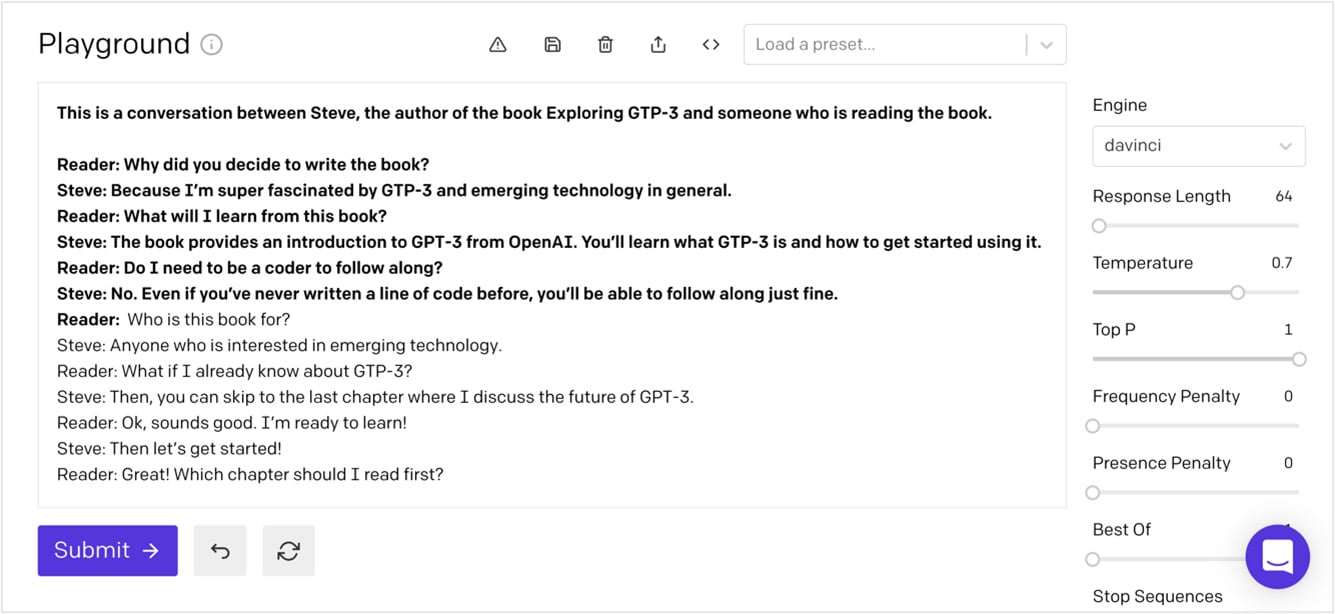

Here is an example of a few-shot prompt to generate a simulated conversation. Notice that the examples provide a back-and-forth dialog, with things that might be said in a conversation:

This is a conversation between Steve, the author of the book Exploring GPT-3 and someone who is reading the book.
Reader: Why did you decide to write the book?
Steve: Because I'm super fascinated by GPT-3 and emerging technology in general.
Reader: What will I learn from this book?
Steve: The book provides an introduction to GPT-3 from OpenAI. You'll learn what GPT-3 is and how to get started using it.
Reader: Do I need to be a coder to follow along?
Steve: No. Even if you've never written a line of code before, you'll be able to follow along just fine.
Reader:

In the following screenshot, you can see that GPT-3 continues the simulated conversation that was started in the examples provided in the prompt:

Figure 1.3 – Few-shot prompt example
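The structure of the few-shot conversation prompt can also be sketched in code. The following Python example is only an illustration: the function name build_conversation_prompt and its parameters are hypothetical. It joins an introductory description, a list of example exchanges, and a trailing speaker label so that the completion picks up with that speaker's next line.

```python
def build_conversation_prompt(intro, exchanges, next_speaker):
    """Return a dialog-style prompt that ends with an open speaker label."""
    lines = [intro]
    # Each example exchange becomes one "Speaker: text" line.
    for speaker, text in exchanges:
        lines.append(f"{speaker}: {text}")
    # End with the label of whoever should speak next, leaving the text to GPT-3.
    lines.append(f"{next_speaker}:")
    return "\n".join(lines)


prompt = build_conversation_prompt(
    "This is a conversation between Steve, the author of the book "
    "Exploring GPT-3 and someone who is reading the book.",
    [
        ("Reader", "Why did you decide to write the book?"),
        ("Steve", "Because I'm super fascinated by GPT-3 and emerging technology in general."),
        ("Reader", "What will I learn from this book?"),
        ("Steve", "The book provides an introduction to GPT-3 from OpenAI. "
                  "You'll learn what GPT-3 is and how to get started using it."),
        ("Reader", "Do I need to be a coder to follow along?"),
        ("Steve", "No. Even if you've never written a line of code before, "
                  "you'll be able to follow along just fine."),
    ],
    next_speaker="Reader",
)
print(prompt)
```

Keeping the examples in a list makes it easy to add or remove exchanges and see how the number of examples affects the completion.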

Now that you understand the different prompt types, let's take a look at some prompt examples.

Prompt examples

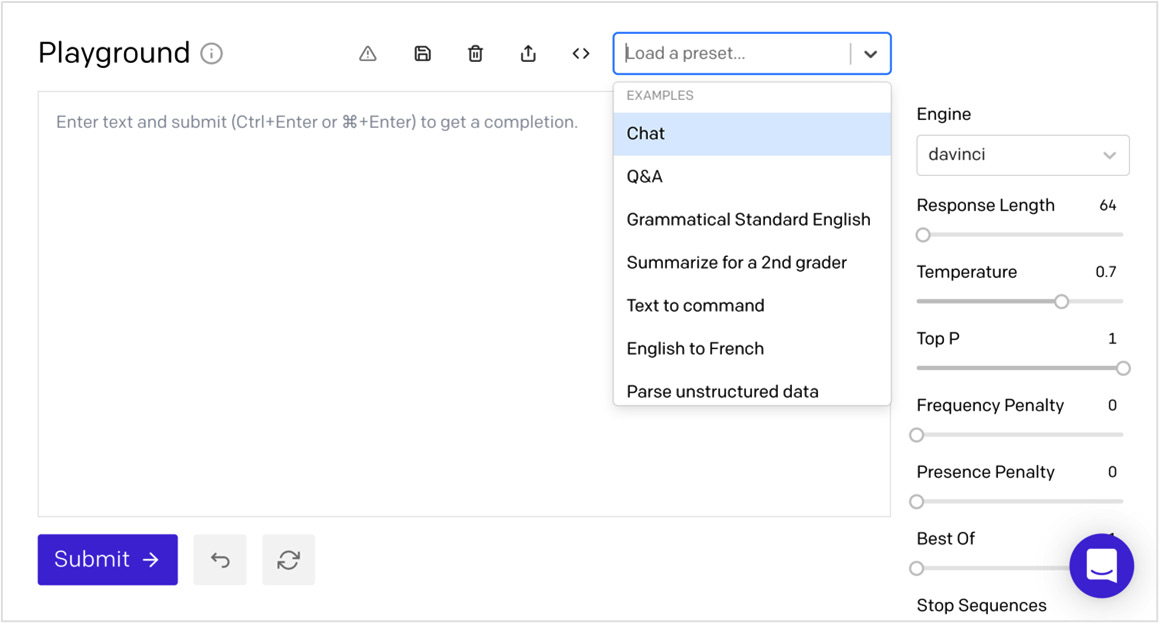

The OpenAI API can handle a variety of tasks. The possibilities range from generating original stories to performing complex text analysis, and everything in between. To get familiar with the kinds of tasks GPT-3 can perform, OpenAI provides a number of prompt examples. You can find example prompts in the Playground and in the OpenAI documentation.

In the Playground, the examples are referred to as presets. Again, we'll cover the Playground in detail in Chapter 3, Working with the OpenAI Playground, but the following screenshot shows some of the presets that are available:

Figure 1.4 – Presets

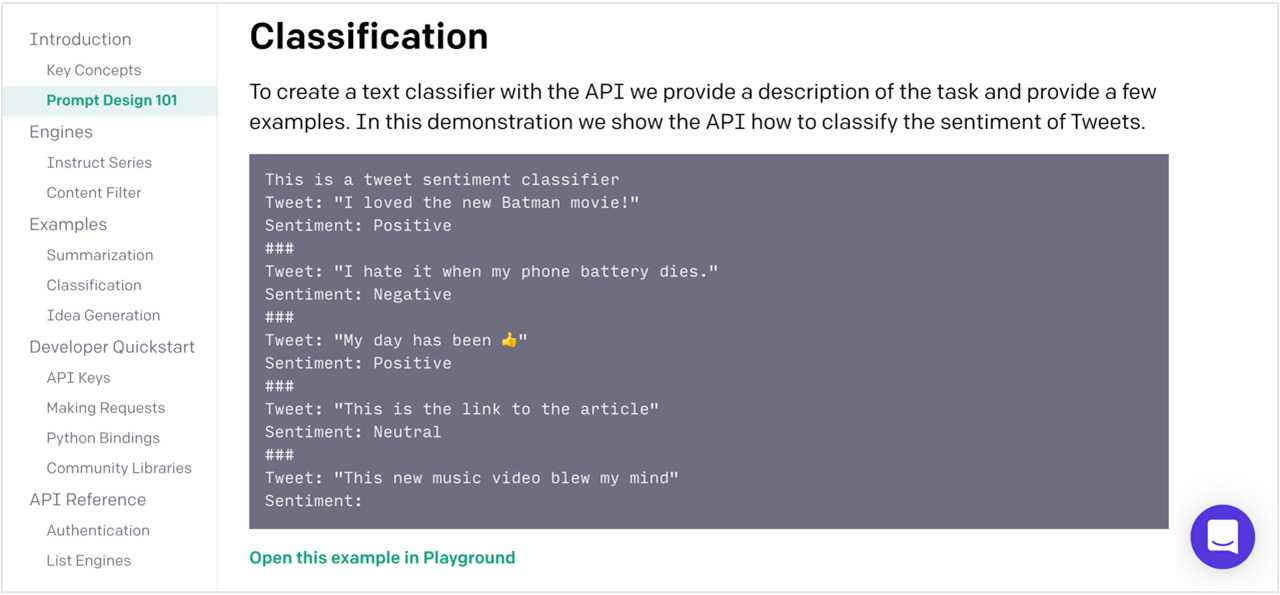

Example prompts are also available in the OpenAI documentation. The OpenAI documentation is excellent and includes a number of great prompt examples, with links to open and test them in the Playground. The following screenshot shows an example prompt from the OpenAI documentation. Notice the Open this example in Playground link below the prompt example. You can use that link to open the prompt in the Playground:

Figure 1.5 – OpenAI documentation provides prompt examples

Now that you have an understanding of prompts, let's talk about how GPT-3 uses them to generate a completion.

Completions

Again, a completion refers to the text that is generated and returned as a result of the provided prompt/input. You'll also recall that GPT-3 was not specifically trained to perform any one type of NLP task—it's a general-purpose language processing system. However, GPT-3 can be shown how to complete a given task using a prompt. This is called meta-learning.

Meta-learning

With most NLP systems, the data used to teach the system how to complete a task is provided when the underlying ML model is trained. So, to improve results for a given task, the model's training must be updated, and a new version of the model must be built. GPT-3 works differently because it isn't trained for any specific task. Rather, it was designed to recognize patterns in the prompt text and to continue those patterns using the underlying general-purpose model. This approach is referred to as meta-learning because the prompt is used to teach GPT-3 how to generate the best possible completion, without the need for retraining. So, in effect, the different prompt types (zero-shot, one-shot, and few-shot) can be used to program GPT-3 for different types of tasks, and you can provide a lot of instructions in the prompt: up to 2,048 tokens, shared with the completion. Now is a good time to talk about tokens.

Tokens

When a prompt is sent to GPT-3, it's broken down into tokens. Tokens are numeric representations of words or, more often, parts of words. Numbers are used for tokens rather than words or sentences because they can be processed more efficiently. This enables GPT-3 to work with relatively large amounts of text. That said, as you've learned, there is still a limit of 2,048 tokens (approximately 1,500 words) for the combined prompt and the resulting generated completion.

You can stay under the token limit by estimating the number of tokens that will be used in your prompt and resulting completion. On average, for English words, every four characters represent one token. So, just add the number of characters in your prompt to the response length and divide the sum by four. This will give you a general idea of the tokens required. This is helpful if you're trying to get an idea of how many tokens are required for a number of tasks.
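The arithmetic described above can be written as a short Python sketch. The names estimate_tokens and fits_token_limit are hypothetical, and the sketch assumes that the expected response length is given in characters and that the 2,048-token limit is inclusive. Because four characters per token is only an average, treat the result as a rough estimate rather than an exact count.

```python
import math

TOKEN_LIMIT = 2048      # combined prompt + completion limit discussed in this chapter
CHARS_PER_TOKEN = 4     # rule-of-thumb average for English text


def estimate_tokens(prompt, expected_completion_chars):
    """Estimate total tokens from prompt characters plus expected completion characters."""
    total_chars = len(prompt) + expected_completion_chars
    # Round up so the estimate errs on the side of using more tokens.
    return math.ceil(total_chars / CHARS_PER_TOKEN)


def fits_token_limit(prompt, expected_completion_chars, limit=TOKEN_LIMIT):
    """Return True if the estimate is within the limit (treated as inclusive here)."""
    return estimate_tokens(prompt, expected_completion_chars) <= limit


prompt = "Write an email to my friend Jay thanking him for covering my shift."
print(estimate_tokens(prompt, expected_completion_chars=400))
print(fits_token_limit(prompt, expected_completion_chars=400))
```

Since the estimate can be off for short or unusual text, it's best used for planning, with an exact token count checked separately when you're close to the limit.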

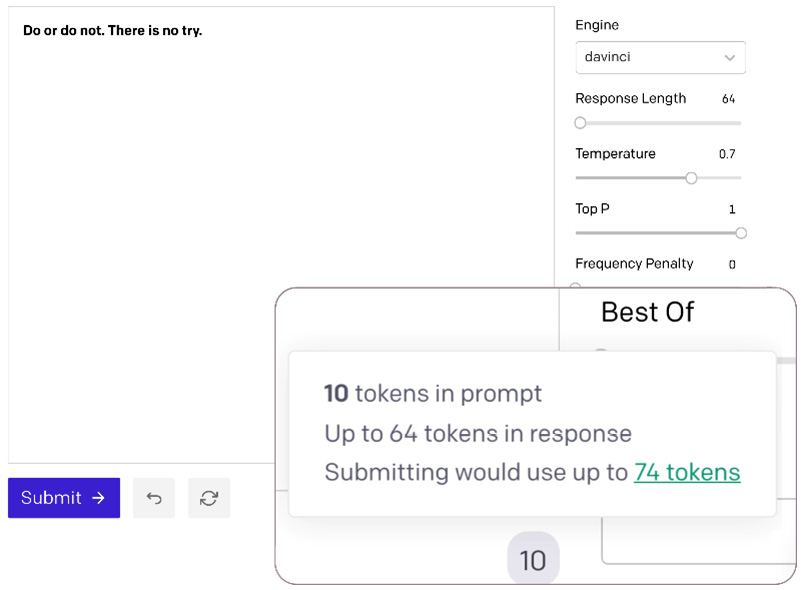

Another way to get the token count is with the token count indicator in the Playground. This is located just under the large text input, on the bottom right. The magnified area in the following screenshot shows the token count. If you hover your mouse over the number, you'll also see the total count with the completion. For our example, the prompt "Do or do not. There is no try." (the wise words of Master Yoda) uses 10 tokens, or 74 tokens with the completion:

Figure 1.6 – Token count

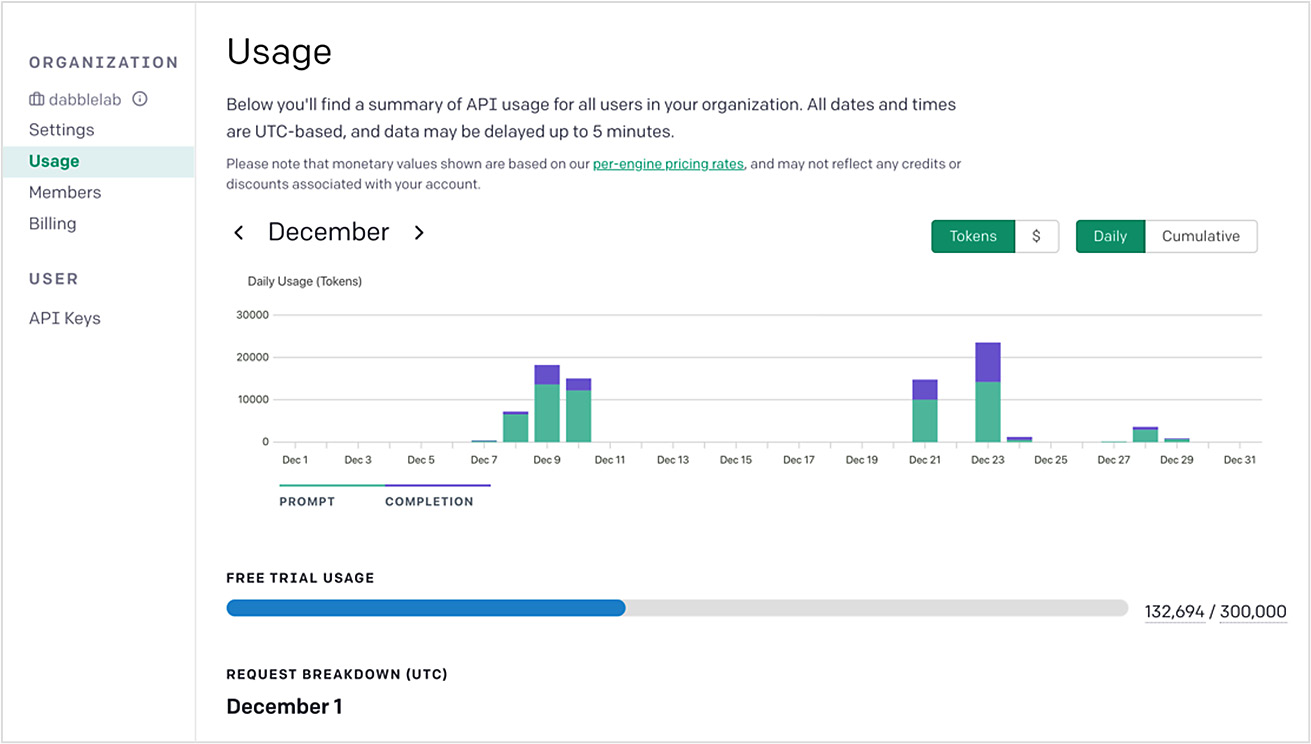

Understanding tokens is important for staying under the 2,048-token limit, and also because tokens are what OpenAI uses as the basis for usage fees. Overall token usage reporting is available for your account at https://beta.openai.com/account/usage. The following screenshot shows an example usage report. We'll discuss this more in Chapter 3, Working with the OpenAI Playground:

Figure 1.7 – Usage statistics

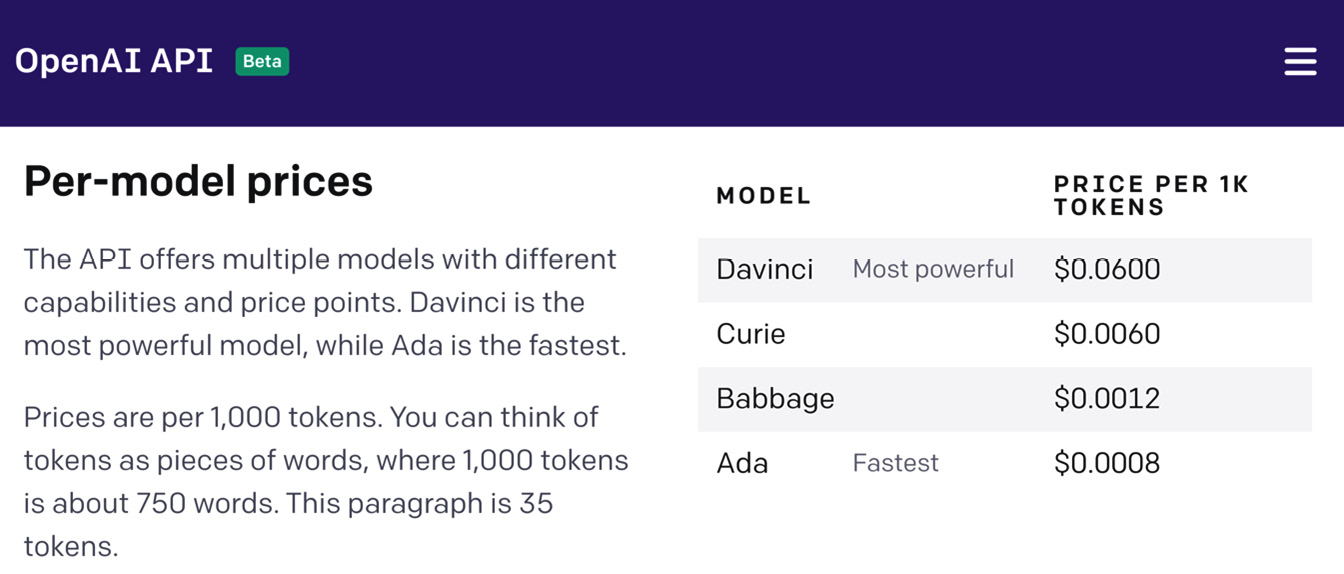

In addition to token usage, the other thing that affects the costs associated with using GPT-3 is the engine you choose to process your prompts. The engine refers to the language model that will be used. The main difference between the engines is the size of the associated model. Larger models can complete more complex tasks, but smaller models are more efficient. So, depending on the task complexity, you can significantly reduce costs by using a smaller model. The following screenshot shows the model pricing at the time of publishing. As you can see, the cost differences can be significant:

Figure 1.8 – Model pricing
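To see how token usage and engine choice combine into a cost, here is a rough Python sketch. The function name estimate_cost is hypothetical, the sketch assumes prices are quoted per 1,000 tokens, and the two prices in the usage example are made-up placeholders, not actual OpenAI prices. Substitute the current prices for the engines you're comparing.

```python
def estimate_cost(total_tokens, price_per_1k_tokens):
    """Estimate the cost of a request, assuming a price quoted per 1,000 tokens."""
    return (total_tokens / 1000) * price_per_1k_tokens


# Placeholder prices for illustration only; these are NOT real OpenAI prices.
hypothetical_prices = {
    "larger-engine": 0.05,
    "smaller-engine": 0.001,
}

tokens_used = 74  # for example, a prompt plus its completion
for engine, price in hypothetical_prices.items():
    print(engine, estimate_cost(tokens_used, price))
```

Running the same token count against each engine's price makes it easy to see how much a smaller model could save for a task it handles well.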

So, each engine, or model, has a different cost, and the one you'll need depends on the task you're performing. Let's look at the different engine options next.