Managing tables

This recipe shows you how to create a table and insert data to explain different behaviors in storing data. Here you will be introduced to the different options that are available from a life cycle perspective, such as tables being permanent, temporary, volatile, and so on. Most of the concepts are not new, so the focus is going to be on the specifics related to Snowflake. We will start with a simple example that creates a table. We shall insert some sample data into it and then try out different variations on creating tables in Snowflake.

Getting ready

The following examples can be run either via the Snowflake web UI or the SnowSQL command-line client.

How to do it…

Let's start by creating a table for storing customer data. We shall start with the DDL statement for creating a table:

- The following example DDL statement will be executed to create a table called

CUSTOMERSin Snowflake:CREATE TABLE customers ( id INT NOT NULL, last_name VARCHAR(100) , first_name VARCHAR(100), email VARCHAR(100), company VARCHAR(100), phone VARCHAR(100), address1 VARCHAR(150), address2 VARCHAR(150), city VARCHAR(100), state VARCHAR(100), postal_code VARCHAR(15), country VARCHAR(50) );

The command should successfully execute, generating the following message:

Figure 2.11 – Table created successfully

- To confirm that the table has been generated as per the specification, we can run a

DESCRIBE TABLEstatement a shown:DESCRIBE TABLE customers;

It should generate the following results in the Snowflake web UI:

Figure 2.12 – Output of the DESCRIBE statement

- Let's assume there was something wrong with a data type; for example, say

ADDRESS1andADDRESS2were supposed to be stored as aSTRINGdata type. This can be addressed using theREPLACE TABLEstatement, along withCREATE. This will overwrite the existingCUSTOMERStable:CREATE TABLE customers ( id INT NOT NULL, last_name VARCHAR(100) , first_name VARCHAR(100), email VARCHAR(100), company VARCHAR(100), phone VARCHAR(100), address1 STRING, address2 STRING, city VARCHAR(100), state VARCHAR(100), postal_code VARCHAR(15), country VARCHAR(50) );

- Let's verify whether the desired change has been successfully applied. For that, we can execute the

DESCRIBE TABLEstatement again as shown:DESCRIBE TABLE customers;

It should generate the following result in the Snowflake web UI:

Figure 2.13 – Output of the DESCRIBE statement after the changes in the table

Please note the data types. We shall discuss this in the How it works… section along with how

REPLACEworks and how it is a shorthand for a two-step process typically required for managing the life cycle of a table. - Let's now load this table with some data before we continue with the rest of the recipe. To do so, run the following command:

COPY INTO customers FROM s3://snowflake-cookbook/Chapter02/r3/customer.csv FILE_FORMAT = (TYPE = csv SKIP_HEADER = 1 FIELD_OPTIONALLY_ENCLOSED_BY = '"');

This should complete with the following message:

Figure 2.14 – Table loaded

- Let's now look at scenarios where the creation of a table is based on an existing table. A common pattern in the table life cycle involves deriving a table from an existing table. This is called

CTASorCREATE TABLE ... AS SELECT. To explain the use ofCTAS, we will have some records inserted into theCUSTOMERStable. The dataset has 100 rows, as shown in the following screenshot (showing the first six columns of the table):

Figure 2.15 – Sample data shown for the customer table

- The dataset will be copied into a new table that will have the same structure as the

CUSTOMERStable. The statements to be executed are as follows:CREATE OR REPLACE TABLE customers_deep_copy AS SELECT * FROM customers;

This should succeed with the following message:

Figure 2.16 – Deep copy table created

What we now have created is a deep copy of the

CUSTOMERStable and a new table, which has received all data fromCUSTOMERSand is calledCUSTOMERS_DEEP_COPY. The deep copy means that the table structure and data has been copied, as opposed to a shallow copy, which would copy the table structure only. This copied table is now an independent copy that can have a life cycle of its own with no changes or side effects originating from any operations performed on theCUSTOMERStable. ASELECT QUERYstatement on this new table would generate the same results as shown in the previous table. - Now let's look at another table copying method – shallow copy. In certain situations, a new table is required to be created with the same structure as an existing table, but the data is not to be copied, in which case a shallow copy is created. This is achieved by using the

LIKEkeyword as provided by Snowflake:CREATE OR REPLACE TABLE customers_shallow_copy LIKE customers;

This should succeed with the following message:

Figure 2.17 – Shallow copy table created

- This should create a table that has the same structure as the

CUSTOMERStable but with no data in it:SELECT COUNT(*) FROM customers_shallow_copy;

The result of the following count query is 0 rows, as shown:

Figure 2.18 – New empty table created

- Until now, we have seen the creation of tables with permanent life – the table will exist and store data until explicitly dropped. This is the default behavior from a life cycle perspective. There are tables that handle transitory data and so they might be required only temporarily. For such scenarios, there are temporary and transient tables. Let's create these tables by running the following SQL statements:

CREATE TEMPORARY TABLE customers_temp AS SELECT * FROM customers WHERE TRY_TO_NUMBER(postal_code) IS NOT NULL; CREATE TRANSIENT TABLE customers_trans AS AS SELECT * FROM customers WHERE TRY_TO_NUMBER(postal_code) IS NULL;

- The preceding SQL script will allow you to create two tables,

customers_tempandcustomers_trans. The two tables are not permanent, but the tables have limitations. If you end the web UI session at this point, then thecustomers_temptable will not be recoverable after a re-login. Transient tables are available after a session has been closed and will retain data in a subsequent session created by user login; however, they don't consume fail-safe storage. This is an important mechanism for retaining data across sessions and can have applications in scenarios that require state management or in ETL jobs.

How it works…

REPLACE is actually a shorthand for a two-step process that's required when a table has to be deleted (dropped) and then recreated. That would typically be done by executing the two statements in sequence as follows:

DROP TABLE IF EXISTS CREATE TABLE customers …

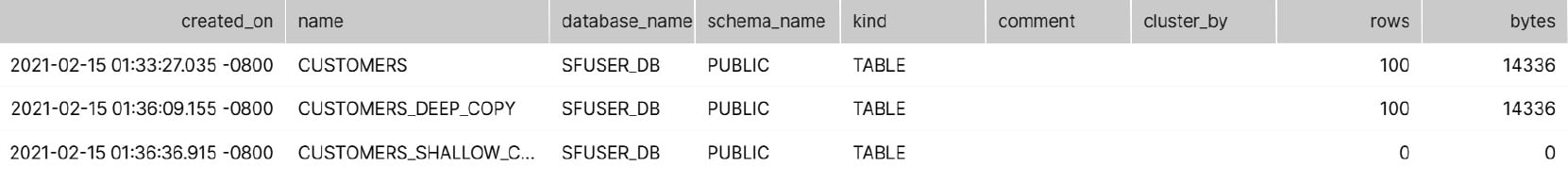

The deep and shallow copies can be explained by the following query and the result generated by Snowflake:

show tables like 'customers%';

This shows a table with three rows, each showing a summary of the three tables that we have generated previously, explaining the differences and similarities. The following table shows that the deep copies of the dataset are exactly the same while the shallow copy has been deprived of data, though the metadata is the same:

Figure 2.19 – The show tables command output showing the copies of the table

A thing to note in the preceding table is the kind column. The column is showing that the tables created have the kind attribute set as TABLE, which is the default type of table – a permanent table structure to be populated with data rows. (Please note that a select set of columns is being shown here for the sake of clarity.)

A local temporary table (also known as a volatile table) persists for the duration of the user session in which it was created and is not visible to other users. A temporary table's definition and contents are dropped at the end of the user session.

Transient tables are non-permanent tables, but unlike temporary tables, transient tables exist until explicitly dropped and are visible to any user with the appropriate privileges. Transient tables have a lower level of data protection than permanent tables. Data in a transient table may be lost in the event of a system failure. Transient tables should only be used for data that can be recreated in the event that the data is lost.

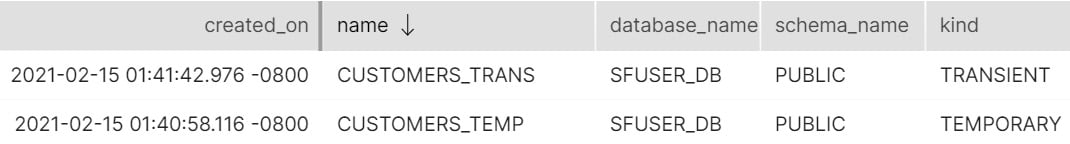

A show tables command for the customers_temp and customers_trans tables will show a table similar to the following table (please note that a limited set of columns is shown here for the sake of clarity):

Figure 2.20 – The show tables command output highlighting the temporary and transient nature of tables

Please note the content for the kind column. It shows that the tables are not permanent.

There's more…

One aspect of CREATE TABLE statements, CLONE, has been left for discussion in Chapter 8, Back to the Future with Time Travel. Temporary tables, however, cannot be cloned. Only permanent tables are fail-safe. Fail-safes will be discussed further in later chapters.