Performing mean or median imputation

Mean or median imputation consists of replacing missing data with the variable’s mean or median value. To avoid data leakage, we determine the mean or median using the train set, and then use these values to impute the train and test sets, and all future data.

Out of the box, scikit-learn and Feature-engine learn the mean or median from the train set and store these parameters for future use.
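The sketch below shows what "learn and store the parameters" means in practice, using the fit/transform pattern in plain pandas. The class TrainMedianImputer and its medians_ attribute are hypothetical names for illustration only. They are not part of pandas, scikit-learn, or Feature-engine. The tiny train and test sets are made up.

```python
import numpy as np
import pandas as pd


class TrainMedianImputer:
    """Hypothetical sketch: learn medians on train data, reuse them later."""

    def fit(self, X: pd.DataFrame) -> "TrainMedianImputer":
        # Learn one median per numerical column from the training data only.
        self.medians_ = X.select_dtypes(include="number").median().to_dict()
        return self

    def transform(self, X: pd.DataFrame) -> pd.DataFrame:
        # Fill gaps with the stored train medians; nothing is recomputed on new data.
        return X.fillna(value=self.medians_)


train = pd.DataFrame({"age": [20.0, 30.0, np.nan, 40.0]})
test = pd.DataFrame({"age": [np.nan, 100.0, 200.0]})

imputer = TrainMedianImputer().fit(train)
test_t = imputer.transform(test)

print(imputer.medians_)        # {'age': 30.0}
print(test_t["age"].tolist())  # [30.0, 100.0, 200.0]
```

The test set's own median would be 150. The sketch instead fills the gap with 30, the value learned from the train set, and this is what avoids data leakage.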

In this recipe, we will perform mean and median imputation using pandas, scikit-learn, and Feature-engine.

Note

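Use mean imputation if the variables are normally distributed and median imputation otherwise, because the median is robust to skew and outliers. If the percentage of missing data is high, mean and median imputation may distort the variable's original distribution, for example, by shrinking its variance.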
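The following sketch illustrates this distortion on simulated data. It assumes a right-skewed (lognormal) variable with 40% of its values removed completely at random. Both choices are arbitrary and only serve to make the effect visible.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Simulated right-skewed variable, so its mean and median differ.
x = pd.Series(rng.lognormal(mean=0.0, sigma=1.0, size=1_000))

# Remove roughly 40% of the values completely at random.
missing = rng.random(x.size) < 0.4
x_missing = x.mask(missing)

observed = x_missing.dropna()
median_imputed = x_missing.fillna(observed.median())
mean_imputed = x_missing.fillna(observed.mean())

print(f"mean={observed.mean():.2f}, median={observed.median():.2f}")
print(f"std observed={observed.std():.2f}")
print(f"std after median imputation={median_imputed.std():.2f}")
print(f"std after mean imputation={mean_imputed.std():.2f}")
```

Every imputed value sits at a single point, so the standard deviation after imputation is smaller than that of the observed values. The skew also pulls the mean above the median, which is why the median tends to be the safer choice for skewed variables.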

How to do it...

Let’s begin this recipe:

- First, we’ll import pandas and the required functions and classes from scikit-learn and Feature-engine:

    import pandas as pd
    from sklearn.model_selection import train_test_split
    from sklearn.impute import SimpleImputer
    from sklearn.compose import ColumnTransformer
    from feature_engine.imputation import MeanMedianImputer

- Let’s load the dataset that we prepared in the Technical requirements section:

    data = pd.read_csv("credit_approval_uci.csv")

- Let’s split the data into train and test sets with their respective targets:

    X_train, X_test, y_train, y_test = train_test_split(
        data.drop("target", axis=1),
        data["target"],
        test_size=0.3,
        random_state=0,
    )

- Let’s make a list with the numerical variables by excluding variables of type object:

    numeric_vars = X_train.select_dtypes(exclude="O").columns.to_list()

If you execute numeric_vars, you will see the names of the numerical variables: ['A2', 'A3', 'A8', 'A11', 'A14', 'A15'].

- Let’s capture the variables’ median values in a dictionary:

    median_values = X_train[numeric_vars].median().to_dict()

Tip

Note how we calculate the median using the train set. We will use these values to replace missing data in the train and test sets. To calculate the mean, use pandas mean() instead of median().

If you execute median_values, you will see a dictionary with the median value per variable: {'A2': 28.835, 'A3': 2.75, 'A8': 1.0, 'A11': 0.0, 'A14': 160.0, 'A15': 6.0}.
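You can try steps 4 and 5 without the credit approval data using the sketch below. It runs on a made-up DataFrame whose column names only mimic the dataset. The categorical column is created with an explicit dtype=object, so that select_dtypes(exclude="O") drops it regardless of how your pandas version stores text columns.

```python
import numpy as np
import pandas as pd

# Made-up stand-in for X_train: "A1" is categorical, "A2" and "A3" are numerical.
X_train = pd.DataFrame({
    "A1": pd.Series(["a", "b", None, "a"], dtype=object),
    "A2": [1.0, np.nan, 3.0, 10.0],
    "A3": [0.5, 0.5, np.nan, 2.5],
})

# Step 4: keep every column that is not of type object.
numeric_vars = X_train.select_dtypes(exclude="O").columns.to_list()

# Step 5: medians (and, alternatively, means) learned from the train set.
median_values = X_train[numeric_vars].median().to_dict()
mean_values = X_train[numeric_vars].mean().to_dict()

print(numeric_vars)   # ['A2', 'A3']
print(median_values)  # {'A2': 3.0, 'A3': 0.5}
print(mean_values)
```

The value 10.0 in A2 pulls its mean well above its median, which is the same skew effect discussed in the earlier note.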

- Let’s replace missing data with the median:

    X_train_t = X_train.fillna(value=median_values)
    X_test_t = X_test.fillna(value=median_values)

If you execute X_train_t[numeric_vars].isnull().sum() after the imputation, the number of missing values in the numerical variables should be 0.
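When fillna() receives a dictionary, it only fills the columns named in its keys. The following sketch on made-up data shows two things. The categorical column keeps its missing values, and the test set is filled with the median learned from the train set.

```python
import numpy as np
import pandas as pd

X_train = pd.DataFrame({
    "A1": pd.Series(["a", None, "b"], dtype=object),
    "A2": [1.0, np.nan, 5.0],
})
X_test = pd.DataFrame({
    "A1": pd.Series([None, "a"], dtype=object),
    "A2": [np.nan, 100.0],
})

# Median learned from the train set only.
median_values = X_train[["A2"]].median().to_dict()

X_train_t = X_train.fillna(value=median_values)
X_test_t = X_test.fillna(value=median_values)

# Only "A2" is filled; "A1" is not a key in the dictionary, so it keeps its NaN.
print(X_train_t.isnull().sum().to_dict())  # {'A1': 1, 'A2': 0}
print(X_test_t["A2"].tolist())             # [3.0, 100.0]
```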

Note

By default, pandas fillna() returns a new DataFrame with the imputed values. To replace missing data in the original DataFrame instead, set the inplace parameter to True: X_train.fillna(value=median_values, inplace=True).

Now, let’s impute missing values with the median using scikit-learn.

- Let’s set up the imputer to replace missing data with the median:

imputer = SimpleImputer(strategy="median")

Note

To perform mean imputation, set up SimpleImputer() as follows: imputer = SimpleImputer(strategy="mean").
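The sketch below compares both strategies on a small made-up numpy array. The two imputers differ only in the strategy string. The value 9.0 in the first column pulls that column's mean away from its median.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Made-up numerical matrix with one missing value per column.
X = np.array([
    [1.0, 10.0],
    [2.0, np.nan],
    [np.nan, 40.0],
    [9.0, 10.0],
])

median_imputer = SimpleImputer(strategy="median").fit(X)
mean_imputer = SimpleImputer(strategy="mean").fit(X)

print(median_imputer.statistics_)  # column medians: 2.0 and 10.0
print(mean_imputer.statistics_)    # column means: 4.0 and 20.0

# transform() fills each gap with the statistic learned for that column.
X_median = median_imputer.transform(X)
print(X_median)
```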

- We restrict the imputation to the numerical variables by using ColumnTransformer():

    ct = ColumnTransformer(
        [("imputer", imputer, numeric_vars)],
        remainder="passthrough",
        force_int_remainder_cols=False,
    ).set_output(transform="pandas")

Note

Scikit-learn can return numpy arrays, pandas DataFrames, or Polars DataFrames, depending on how we set the transform output. By default, it returns numpy arrays.

- Let’s fit the imputer to the train set so that it learns the median values:

ct.fit(X_train)

- Let’s check out the learned median values:

ct.named_transformers_.imputer.statistics_

The previous command returns the median values per variable:

array([ 28.835, 2.75, 1., 0., 160., 6.])

- Let’s replace missing values with the median:

    X_train_t = ct.transform(X_train)
    X_test_t = ct.transform(X_test)

- Let’s display the resulting training set:

print(X_train_t.head())

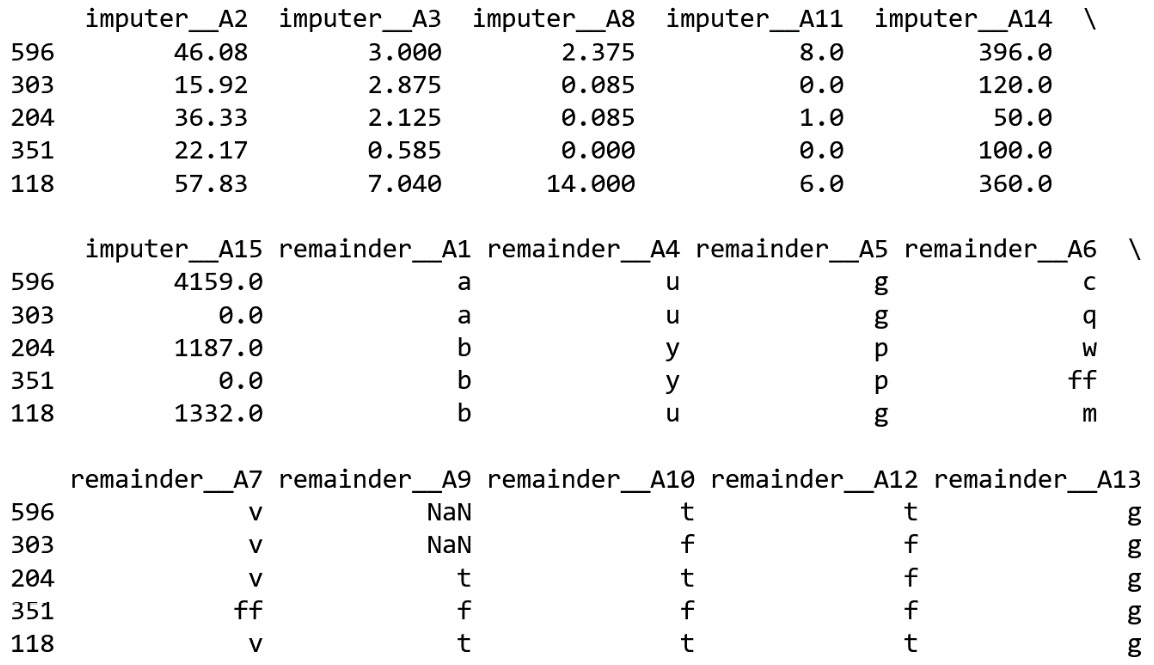

We see the resulting DataFrame in the following image:

Figure 1.3 – Training set after the imputation. The imputed variables are marked by the imputer prefix; the untransformed variables show the prefix remainder

Finally, let’s perform median imputation using Feature-engine.

- Let’s set up the imputer to replace missing data in numerical variables with the median:

imputer = MeanMedianImputer( imputation_method="median", variables=numeric_vars, )

Note

To perform mean imputation, change imputation_method to "mean". By default, MeanMedianImputer() imputes all numerical variables in the DataFrame and ignores categorical variables. Use the variables argument to restrict the imputation to a subset of numerical variables.

- Fit the imputer so that it learns the median values:

imputer.fit(X_train)

- Inspect the learned medians:

imputer.imputer_dict_

The previous command returns the median values in a dictionary:

{'A2': 28.835, 'A3': 2.75, 'A8': 1.0, 'A11': 0.0, 'A14': 160.0, 'A15': 6.0}

- Finally, let’s replace the missing values with the median:

    X_train = imputer.transform(X_train)
    X_test = imputer.transform(X_test)

Feature-engine’s MeanMedianImputer() returns a DataFrame. You can check that the imputed variables do not contain missing values using X_train[numeric_vars].isnull().mean(), which should return 0 for each variable.

How it works...

In this recipe, we replaced missing data with the variable’s median values using pandas, scikit-learn, and feature-engine.

We divided the dataset into train and test sets using scikit-learn’s train_test_split() function. The function takes as arguments the predictor variables, the target, the fraction of observations to retain in the test set, and a random_state value for reproducibility. It returned a train set with 70% of the original observations and a test set with the remaining 30%, assigned at random.
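Here is a quick check of the 70:30 split on a made-up DataFrame with 10 rows. With test_size=0.3, 3 rows go to the test set. Reusing the same random_state reproduces exactly the same split.

```python
import pandas as pd
from sklearn.model_selection import train_test_split

# Made-up data: 10 rows, one predictor and a binary target.
data = pd.DataFrame({"A2": range(10), "target": [0, 1] * 5})

X_train, X_test, y_train, y_test = train_test_split(
    data.drop("target", axis=1),
    data["target"],
    test_size=0.3,
    random_state=0,
)
print(len(X_train), len(X_test))  # 7 3

# Re-running with the same random_state yields the same rows in the train set.
X_train_again, *_ = train_test_split(
    data.drop("target", axis=1), data["target"], test_size=0.3, random_state=0
)
print(X_train.index.equals(X_train_again.index))  # True
```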

To impute missing data with pandas, in step 5, we created a dictionary with the numerical variable names as keys and their medians as values. The median values were learned from the training set to avoid data leakage. To replace missing data, we applied pandas’ fillna() to the train and test sets, passing the dictionary with the median values per variable to its value parameter.

To replace the missing values with the median using scikit-learn, we used SimpleImputer() with the strategy set to "median". To restrict the imputation to numerical variables, we used ColumnTransformer(). With the remainder argument set to "passthrough", ColumnTransformer() returned all the variables seen in the training set: the imputed ones first, followed by those that were not transformed.

Note

ColumnTransformer() changes the names of the variables in the output. The transformed variables show the prefix imputer__ (the name we gave the transformer), and the unchanged variables show the prefix remainder__.

In step 8, we set the output of the column transformer to pandas to obtain a DataFrame as a result. By default, ColumnTransformer() returns numpy arrays.

Note

From version 1.4.0, scikit-learn transformers can return numpy arrays, pandas DataFrames, or Polars DataFrames as a result of the transform() method.

With fit(), SimpleImputer() learned the median of each numerical variable in the train set and stored them in its statistics_ attribute. With transform(), it replaced the missing values with the medians.

To replace missing values with the median using Feature-engine, we used MeanMedianImputer() with imputation_method set to "median". To restrict the imputation to a subset of variables, we passed the variable names in a list to the variables parameter. With fit(), the transformer learned and stored the median values per variable in a dictionary in its imputer_dict_ attribute. With transform(), it replaced the missing values, returning a pandas DataFrame.