Loading your data

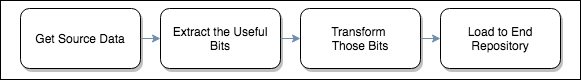

As outlined in previous chapters, traditional systems engineering commonly moves data from its source to its destination using the extract, transform, load (ETL) pattern, whereas Spark tends to rely on schema-on-read. Because it's important to understand how these concepts relate to schemas and input formats, let's describe this aspect in more detail.

On the face of it, the ETL approach seems sensible, and indeed it has been implemented by just about every organization that stores and handles data. There are some very popular, feature-rich products that perform the ETL task very well, not to mention Apache's open source offering, Apache Camel (http://camel.apache.org/etl-example.html).

However, this apparently straightforward approach belies the true effort required to implement even a simple data pipeline. This is because we must ensure that all data complies with a fixed schema before we can use it. For example, if we wanted to ingest some data from a starting directory...