Gradient boosting classifier

Gradient boosting is a competition-winning algorithm that boosts weak learners iteratively: each iteration shifts focus toward the observations that previous iterations predicted poorly, and the final model is an ensemble of those weak learners, typically decision trees. Like other boosting methods, it builds the model in a stage-wise fashion, but it generalizes them by allowing optimization of an arbitrary differentiable loss function.
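The stage-wise idea described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a reference implementation: it assumes a squared-error loss (for which the negative gradient is simply the residual), uses one-split regression stumps as the weak learners, and runs on made-up toy data. The names `fit_stump`, `boost`, `n_stages`, and `learning_rate` are hypothetical and chosen only for this example.

```python
# Illustrative sketch only: names such as fit_stump and boost are hypothetical,
# and squared-error loss is an assumption made for this example.

def fit_stump(xs, targets):
    """Fit a one-split regression stump (a weak learner) by least squares."""
    best = None
    for threshold in sorted(set(xs)):
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        if not left or not right:
            continue  # a split must leave observations on both sides
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    if best is None:  # no valid split: fall back to a constant prediction
        mean = sum(targets) / len(targets)
        return lambda x: mean
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def mean_squared_error(ys, preds):
    """Average squared difference between targets and predictions."""
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)


def boost(xs, ys, n_stages=20, learning_rate=0.5):
    """Stage-wise boosting: each stump is fitted to the current residuals."""
    base = sum(ys) / len(ys)  # stage 0: a constant model
    preds = [base] * len(xs)
    stumps = []
    history = [mean_squared_error(ys, preds)]
    for _ in range(n_stages):
        # For squared-error loss, the negative gradient is the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        # Add a damped copy of the new weak learner to the ensemble.
        preds = [p + learning_rate * stump(x) for x, p in zip(xs, preds)]
        history.append(mean_squared_error(ys, preds))

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)

    return predict, history


# Made-up toy data, purely for demonstration.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 3.1, 3.0, 2.8, 5.2, 5.0]
predict, history = boost(xs, ys)
print(history[0], history[-1])  # training error before and after boosting
```

Each stage adds a small correction fitted to what the current ensemble still gets wrong, so the training error recorded in `history` does not increase from one stage to the next. Swapping in a different differentiable loss would change only how the per-observation targets (the negative gradients) are computed.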

Let's start with a simple example, since the working principle of gradient boosting (GB) is hard for many data scientists to grasp:

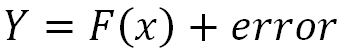

- Initially, we fit a model on the observations that produces 75% accuracy; the remaining unexplained variance is captured in the error term:

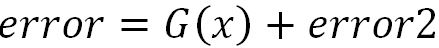

- Then we fit another model on the error term to extract the extra explanatory component and add it to the original model, which should improve the overall accuracy:

- Now, the model is providing 80% accuracy and...