In fact, we have a whole zoo of machine learning algorithms whose popularity has varied over time. We can roughly categorize them into four main approaches: logic-based learning, statistical learning, artificial neural networks, and genetic algorithms.

Logic-based systems were the first to dominate. They relied on basic rules specified by human experts and tried to reason from those rules using formal logic, background knowledge, and hypotheses. In the mid-1980s, artificial neural networks (ANNs) came to the foreground, only to be pushed aside by statistical learning systems in the 1990s. ANNs imitate animal brains and consist of interconnected artificial neurons, which are themselves loosely modeled on biological neurons. They try to model complex relationships between inputs and outputs and to capture patterns in data. Genetic algorithms (GAs) were also popular in the 1990s. They mimic the biological process of evolution and search for optimal solutions using operations such as mutation and crossover.
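To make mutation and crossover concrete, here is a minimal sketch of a genetic algorithm in Python. It is only an illustration under stated assumptions: the toy problem (maximize the number of 1s in a bit string), the function names, and all parameter values are hypothetical choices made for this example, not a reference implementation.

```python
import random


def fitness(bits):
    # Toy objective (an assumption for this sketch): count the 1-bits.
    return sum(bits)


def crossover(a, b, rng):
    # Single-point crossover: head of parent a, tail of parent b.
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def mutate(bits, rate, rng):
    # Flip each bit independently with probability `rate`.
    return [1 - b if rng.random() < rate else b for b in bits]


def evolve(n_bits=20, pop_size=30, generations=50, rate=0.02, seed=0):
    rng = random.Random(seed)  # seeded so that runs are repeatable
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: the fitter half survives unchanged.
        population.sort(key=fitness, reverse=True)
        parents = population[:pop_size // 2]
        # Reproduction: refill the population with mutated offspring.
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng),
                   rate, rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)


best = evolve()
print(fitness(best), best)
```

Because the fitter half of each generation is carried over unchanged, the best fitness in the population never decreases from one generation to the next.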

We are currently (2017) seeing a revolution in deep learning, which we may consider to be a rebranding of neural networks. The term deep learning was coined around 2006 and refers to deep neural networks with many layers. The breakthrough in deep learning was made possible by, among other things, the use of graphics processing units (GPUs), which massively speed up computation. GPUs were originally developed to render video games and are very good at parallel matrix and vector algebra. Some believe that deep learning resembles the way humans learn and may therefore be able to deliver on the promise of sentient machines.

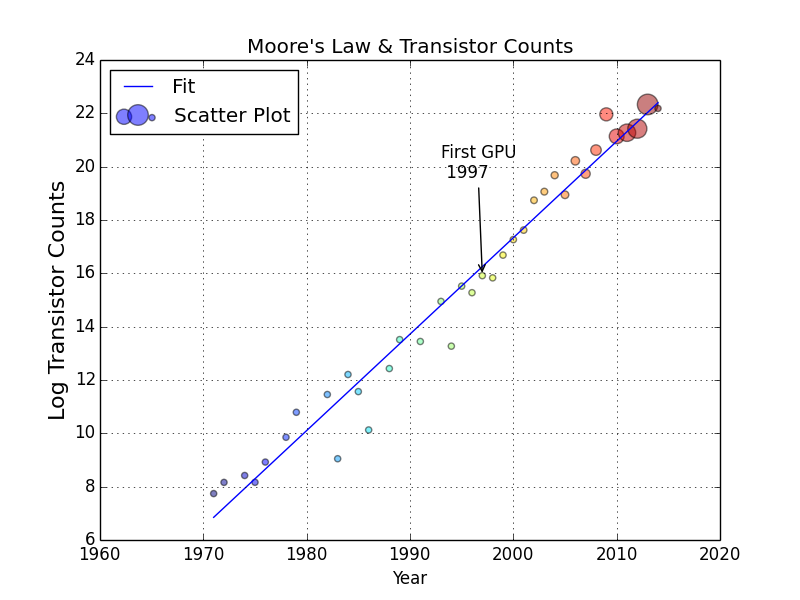

Some of us may have heard of Moore's law-an empirical observation claiming that computer hardware improves exponentially with time. The law was first formulated by Gordon Moore, the co-founder of Intel, in 1965. According to the law, the number of transistors on a chip should double every two years. In the following graph, you can see that the law holds up nicely (the size of the bubbles corresponds to the average transistor count in GPUs):

The consensus seems to be that Moore's law should continue to be valid for a couple of decades. This gives some credibility to Ray Kurzweil's prediction that true machine intelligence will be achieved by 2029.
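The doubling rule behind Moore's law is easy to turn into arithmetic. The short Python sketch below projects a transistor count forward, assuming a doubling every two years. The function name and the starting figures are hypothetical and chosen only for illustration; they are not measured data.

```python
def projected_transistors(base_count, base_year, year, doubling_period=2.0):
    # Each doubling period multiplies the count by 2, so the growth
    # factor is 2 ** (elapsed years / doubling period).
    return base_count * 2 ** ((year - base_year) / doubling_period)


# Hypothetical chip with one million transistors in 2000:
# ten years means five doublings, so the count grows by 2 ** 5 = 32.
print(projected_transistors(1_000_000, 2000, 2010))  # 32000000.0
```

Sustained over two decades, the same rule gives ten doublings, or roughly a thousandfold increase.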