How virtual reality really works

So, what is it about VR that's got everyone so excited? With your headset on, you experience synthetic scenes. It appears 3D, it feels 3D, and maybe you even have a sense of actually being there inside the virtual world. The strikingly obvious thing is: VR looks and feels really cool! But why?

Immersion and presence are the two words used to describe the quality of a VR experience. The Holy Grail is to increase both to the point where it seems so real, you forget you're in a virtual world. Immersion is the result of emulating the sensory input that your body receives (visual, auditory, motor, and so on). This can be explained technically. Presence is the visceral feeling that you get being transported there: a deep emotional or intuitive feeling. You could say that immersion is the science of VR and presence is the art. And that, my friend, is cool.

A number of different technologies and techniques come together to make the VR experience work, which can be separated into two basic areas:

- 3D viewing

- Head-pose tracking

In other words, displays and sensors, like those built into today's mobile devices, are a big reason why VR is possible and affordable today.

Suppose the VR system knows exactly where your head is positioned at any given moment in time. Suppose that it can immediately render and display the 3D scene for this precise viewpoint stereoscopically. Then, wherever and whenever you move, you'll see the virtual scene exactly as you should. You will have a nearly perfect visual VR experience. That's basically it. Ta-dah!

Well, not so fast. Literally.

Stereoscopic 3D viewing

Split-screen stereography was discovered not long after the invention of photography. One example is the popular stereograph viewer from 1876 shown in the following picture (B.W. Kilburn & Co, Littleton, New Hampshire; see http://en.wikipedia.org/wiki/Benjamin_W._Kilburn). A stereo photograph has separate views for the left and right eyes, which are slightly offset to create parallax. This fools the brain into thinking that it's a truly three-dimensional view. The device contains separate lenses for each eye, which let you easily focus on the photo close up:

Similarly, rendering these side-by-side stereo views is the first job of the VR-enabled camera in Unity.

Let's say that you're wearing a VR headset and you're holding your head very still so that the image looks frozen. It still appears better than a simple stereograph. Why?

The old-fashioned stereograph has relatively small twin images, each bounded by a rectangular frame. When your eyes are focused on the center of the view, the 3D effect is convincing, but you will still see the boundaries of the view. Move your eyes around (even with your head still), and any remaining sense of immersion is totally lost. You're just an observer on the outside peering into a diorama.

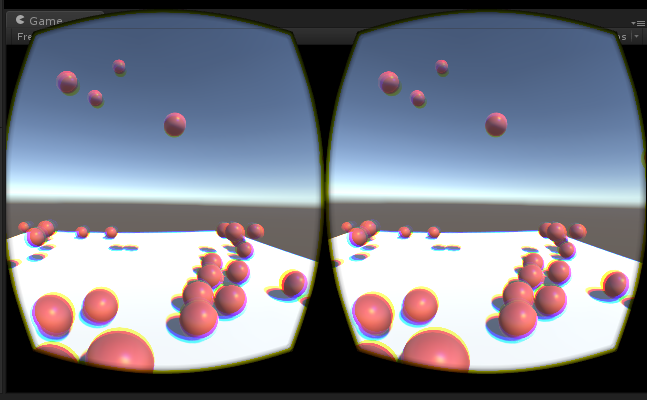

Now, consider what a VR screen looks like without the headset (see the following screenshot):

The first thing that you will notice is that each eye has a barrel-shaped view. Why is that? The headset lens is a very wide-angle lens. So, when you look through it, you have a nice wide field of view. In fact, it is so wide (and tall), it distorts the image (pincushion effect). The graphics software SDK does an inverse of that distortion (barrel distortion) so that it looks correct to us through the lenses. This is referred to as an ocular distortion correction. The result is an apparent field of view (FOV) that is wide enough to include a lot more of your peripheral vision. For example, the Oculus Rift has a FOV of about 100 degrees.
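To make the idea of pre-distorting the image more concrete, here is a minimal sketch that uses a simple radial polynomial model. It is not the shader of any particular SDK; the function name `barrel_predistort` and the coefficients `k1` and `k2` are made up for this illustration.

```python
def barrel_predistort(x, y, k1=-0.22, k2=-0.02):
    """Map a point in normalized, lens-centered coordinates (roughly -1..1)
    to its pre-distorted position using a radial polynomial.

    Negative coefficients pull points toward the center by an amount that
    grows with their distance from it, which produces a barrel shape.
    k1 and k2 are hypothetical; a real headset uses values calibrated
    for its own lenses.
    """
    r2 = x * x + y * y                      # squared distance from lens center
    scale = 1.0 + k1 * r2 + k2 * r2 * r2    # radial scale factor
    return x * scale, y * scale


center = barrel_predistort(0.0, 0.0)   # the center does not move
edge = barrel_predistort(1.0, 0.0)     # a point at the edge is pulled inward
```

Points near the center barely move, while points near the edge are squeezed inward, which is why each eye's image looks barrel-shaped on the raw screen.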

Also, of course, the view angle from each eye is slightly offset, comparable to the distance between your eyes, or the interpupillary distance (IPD). IPD is used to calculate the parallax and can vary from one person to the next. (The Oculus Configuration Utility includes a tool to measure and configure your IPD. Alternatively, you can ask your eye doctor for an accurate measurement.)
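As a rough sketch of how the IPD feeds into the two views, each eye's camera can be placed half the IPD to either side of the head's center. The function name `eye_positions` and the default of 0.064 meters are assumptions made for this example, not values taken from any SDK.

```python
def eye_positions(head_pos, right_dir, ipd_m=0.064):
    """Return (left_eye, right_eye) positions, each offset by half the IPD
    from the head center along the head's right-pointing unit vector.

    ipd_m is in meters; 0.064 is an assumed typical value.
    """
    half = ipd_m / 2.0
    left = tuple(p - half * r for p, r in zip(head_pos, right_dir))
    right = tuple(p + half * r for p, r in zip(head_pos, right_dir))
    return left, right


# Head center 1.7 m above the floor, with "right" along the x axis.
left_eye, right_eye = eye_positions((0.0, 1.7, 0.0), (1.0, 0.0, 0.0))
```

Rendering the scene once from each of these two positions is what produces the parallax between the left and right images.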

It might be less obvious, but if you look closer at the VR screen, you will see color separations, as you'd get from a color printer whose print head is not aligned properly. This is intentional. Light passing through a lens is refracted at different angles based on the wavelength of the light. Again, the rendering software does an inverse of the color separation so that it looks correct to us. This is referred to as a chromatic aberration correction. It helps make the image look really crisp.

The resolution of the screen is also important to get a convincing view. If it's too low-res, you'll see the pixels, or what some refer to as a screen-door effect. The pixel width and height of the display are oft-quoted specifications when comparing HMDs, but the pixels per inch (PPI) value may be more important. Other innovations in display technology such as pixel smearing and foveated rendering (showing higher-resolution details exactly where the eyeball is looking) will also help reduce the screen-door effect.
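PPI is easy to work out from the numbers on a spec sheet: divide the length of the screen's diagonal in pixels by its diagonal in inches. The panel sizes in this small sketch are hypothetical and chosen only to show the effect.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal length in pixels divided by diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Two hypothetical panels with the same resolution but different physical sizes.
small_panel = pixels_per_inch(1920, 1080, 5.0)
large_panel = pixels_per_inch(1920, 1080, 6.0)
```

The same pixel count spread over a larger panel gives a lower PPI, which is why the resolution alone does not tell you how visible the pixels will be.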

When experiencing a 3D scene in VR, you must also consider the frames per second (FPS). If the FPS is too low, the animation will look choppy. Things that affect FPS include the GPU performance and the complexity of the Unity scene (the number of polygons and lighting calculations), among other factors. This is compounded in VR because you need to draw the scene twice, once for each eye. Technology innovations, such as GPUs optimized for VR, frame interpolation, and other techniques will improve the frame rates. For us as developers, performance-tuning techniques in Unity, such as those used by mobile game developers, can be applied in VR. These techniques and optics help make the 3D scene appear realistic.
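One way to think about FPS is as a time budget per frame. The following sketch is plain arithmetic; the per-eye split assumes that each eye is rendered in its own sequential pass, which is a simplification, since real engines can share work between the two eyes.

```python
def frame_budget_ms(target_fps):
    """Milliseconds available to produce one displayed frame."""
    return 1000.0 / target_fps


def per_eye_budget_ms(target_fps):
    """Rough per-eye figure, assuming two separate sequential render passes."""
    return frame_budget_ms(target_fps) / 2.0


budget_60 = frame_budget_ms(60)        # about 16.7 ms
budget_90 = frame_budget_ms(90)        # about 11.1 ms
per_eye_90 = per_eye_budget_ms(90)     # about 5.6 ms
```

Everything the scene needs (scripts, physics, and rendering for both eyes) has to fit inside that budget on every frame.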

Sound is also very important—more important than many people realize. VR should be experienced while wearing stereo headphones. In fact, when the audio is done well but the graphics are pretty crappy, you can still have a great experience. We see this a lot in TV and cinema. The same holds true in VR. Binaural audio gives each ear its own stereo view of a sound source in such a way that your brain imagines its location in 3D space. No special listening devices are needed. Regular headphones will work (speakers will not). For example, put on your headphones and visit the Virtual Barber Shop at https://www.youtube.com/watch?v=IUDTlvagjJA. True 3D audio provides an even more realistic spatial audio rendering, where sounds bounce off nearby walls and can be occluded by obstacles in the scene to enhance the first-person experience and realism.
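One of the cues that binaural audio relies on is the tiny difference in arrival time between your two ears. The sketch below uses a deliberately simplified straight-line model that ignores the shape of the head; the ear spacing of 0.18 meters is an assumed value, and real spatial audio engines use far more detailed models.

```python
import math

SPEED_OF_SOUND_M_S = 343.0   # approximate speed of sound in air at room temperature


def interaural_time_difference(azimuth_deg, ear_spacing_m=0.18):
    """Extra travel time (in seconds) to the far ear for a distant source.

    azimuth_deg is 0 straight ahead and 90 directly to one side.
    ear_spacing_m is an assumed distance between the ears.
    """
    extra_path_m = ear_spacing_m * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_S


itd_ahead = interaural_time_difference(0)    # no difference straight ahead
itd_side = interaural_time_difference(90)    # largest difference, about half a millisecond
```

Delaying one channel by this amount (along with adjusting its loudness) is part of what lets ordinary headphones suggest a direction.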

Lastly, the VR headset should fit your head and face comfortably so that it's easy to forget that you're wearing it, and it should block out light from the real environment around you.

Head tracking

So, we have a nice 3D picture that is viewable in a comfortable VR headset with a wide field of view. If this were all there was to it and you moved your head, it'd feel like you had a diorama box stuck to your face. Move your head and the box moves along with it, much like holding the antique stereograph device or the childhood View-Master. Fortunately, VR is so much better.

The VR headset has a motion sensor (IMU) inside that detects spatial acceleration and rotation rates on all three axes, providing what's called the six degrees of freedom. This is the same technology that is commonly found in mobile phones and some console game controllers. Because the sensor is mounted on your headset, whenever you move your head, the current viewpoint is calculated and used when the next frame's image is drawn. This is referred to as motion detection.

The previous generation of mobile motion sensors was good enough for us to play mobile games on a phone, but for VR, it's not accurate enough. The sensor is sampled thousands of times per second, so small inaccuracies (such as rounding errors) accumulate over time, and the system may eventually lose track of where your head is in the real world. This drift was a major shortfall of the older, phone-based Google Cardboard VR. It could sense your head's motion, but it lost track of your head's orientation. The current generation of phones, such as Google Pixel and Samsung Galaxy, which conform to the Daydream specifications, have upgraded sensors.
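To see how small errors turn into drift, consider this toy simulation. It assumes a head that is perfectly still and a sensor that reports a tiny constant bias; the bias value and sample rate are hypothetical, and a constant bias is only one of several possible error sources.

```python
def integrate_yaw(rate_samples_deg_s, sample_rate_hz):
    """Estimate yaw (in degrees) by summing each rate sample times the
    sample interval, as a naive orientation tracker would."""
    dt = 1.0 / sample_rate_hz
    yaw = 0.0
    for rate in rate_samples_deg_s:
        yaw += rate * dt
    return yaw


SAMPLE_RATE_HZ = 1000
BIAS_DEG_S = 0.05    # hypothetical sensor bias; the true rotation rate is zero

# One minute of readings from a head that is not moving at all.
samples = [BIAS_DEG_S] * (SAMPLE_RATE_HZ * 60)
drift_deg = integrate_yaw(samples, SAMPLE_RATE_HZ)   # about 3 degrees
```

After just one minute, the estimated orientation is already off by about three degrees, and nothing in the sensor data alone can reveal or correct the error.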

High-end HMDs account for drift with a separate positional tracking mechanism. The Oculus Rift does this with outside-in positional tracking, where an array of (invisible) infrared LEDs on the HMD is read by an external optical sensor (infrared camera) to determine your position. You need to remain within the view of the camera for the head tracking to work.

Alternatively, the SteamVR Lighthouse technology used by the VIVE works the other way around: two or more dumb laser emitters are placed in the room (much like the lasers in a barcode reader at the grocery checkout), and optical sensors on the headset read the rays to determine your position.

Windows MR headsets use no external sensors or cameras. Rather, there are integrated cameras and sensors to perform spatial mapping of the local environment around you, in order to locate and track your position in the real-world 3D space.

Either way, the primary purpose is to accurately find the position of your head and other similarly equipped devices, such as handheld controllers.

Together, the position, tilt, and the forward direction of your head—or the head pose—are used by the graphics software to redraw the 3D scene from this vantage point. Graphics engines such as Unity are really good at this.
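As a small illustration of turning a head pose into something a renderer can use, the following sketch converts two head angles into a forward-looking unit vector. The axis convention (y up, z forward, positive pitch looking up) and the function name `forward_vector` are assumptions made for this example; tilt (roll) is left out because it does not change the direction you are facing.

```python
import math


def forward_vector(yaw_deg, pitch_deg):
    """Unit vector pointing where the head is facing.

    Assumed convention: x is right, y is up, z is forward; yaw turns the
    head to the right, and positive pitch looks up.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    return (
        math.cos(pitch) * math.sin(yaw),
        math.sin(pitch),
        math.cos(pitch) * math.cos(yaw),
    )


looking_ahead = forward_vector(0, 0)    # approximately (0, 0, 1)
looking_right = forward_vector(90, 0)   # approximately (1, 0, 0)
```

A graphics engine combines a direction like this with the head position (and the tilt) to build the camera transform for each frame.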

Now, let's say that the screen is getting updated at 90 FPS, and you're moving your head. The software determines the head pose, renders the 3D view, and draws it on the HMD screen. However, you're still moving your head. So, by the time it's displayed, the image is a little out of date with respect to your current position. This is called latency, and it can make you feel nauseous.

Motion sickness caused by latency in VR occurs when you're moving your head and your brain expects the world around you to change exactly in sync. Any perceptible delay can make you uncomfortable, to say the least.

Latency can be measured as the time from reading a motion sensor to rendering the corresponding image, or the sensor-to-pixel delay. According to Oculus's John Carmack:

A total latency of 50 milliseconds will feel responsive, but still noticeably laggy. 20 milliseconds or less will provide the minimum level of latency deemed acceptable.
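To get a feel for these numbers, you can estimate how far the displayed image lags behind your head. The head speed of 100 degrees per second used here is just an assumed figure for a moderate turn.

```python
def angular_error_deg(head_speed_deg_s, latency_ms):
    """How many degrees the head turns during the sensor-to-pixel delay."""
    return head_speed_deg_s * latency_ms / 1000.0


HEAD_SPEED_DEG_S = 100.0   # assumed speed of a moderate head turn

error_at_50_ms = angular_error_deg(HEAD_SPEED_DEG_S, 50.0)   # 5 degrees
error_at_20_ms = angular_error_deg(HEAD_SPEED_DEG_S, 20.0)   # 2 degrees
```

With a field of view of about 100 degrees, an error of 5 degrees means the whole scene is momentarily displaced by roughly a twentieth of the view.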

There are a number of very clever strategies that can be used to implement latency compensation. The details are outside the scope of this book and inevitably will change as device manufacturers improve on the technology. One of these strategies is what Oculus calls the timewarp, which tries to guess where your head will be by the time the rendering is done and uses that future head pose instead of the actual detected one. All of this is handled in the SDK, so as a Unity developer, you do not have to deal with it directly.

Meanwhile, as VR developers, we need to be aware of latency as well as the other causes of motion sickness. Latency can be reduced via the faster rendering of each frame (keeping the recommended FPS). Discomfort can also be reduced by designing experiences that discourage users from moving their heads too quickly and by using other techniques that help them feel grounded and comfortable.

The Rift also improves head tracking and realism by using a skeletal representation of the neck, so that all the rotations that it receives are mapped more accurately to the head rotation. For example, looking down at your lap creates a small forward translation, since the model knows it's impossible to rotate one's head downwards on the spot.
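The effect of a neck model can be sketched with a little trigonometry. This is not the Rift's actual model; it simply assumes that the eyes sit a fixed distance directly above a single neck pivot, and the 0.10 meter default is a made-up value.

```python
import math


def eye_shift_from_pitch(pitch_down_deg, neck_to_eye_m=0.10):
    """Return (forward_m, drop_m): how far the eyes move forward and down
    when the head pitches downward about a pivot at the neck.

    neck_to_eye_m is an assumed distance from the pivot up to the eyes.
    """
    angle = math.radians(pitch_down_deg)
    forward_m = neck_to_eye_m * math.sin(angle)
    drop_m = neck_to_eye_m * (1.0 - math.cos(angle))
    return forward_m, drop_m


upright = eye_shift_from_pitch(0)         # (0.0, 0.0): no rotation, no shift
looking_down = eye_shift_from_pitch(30)   # eyes move about 5 cm forward
```

Even without positional tracking, applying a shift like this makes a pure rotation reading behave more like a real head movement.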

Other than head tracking, stereography, and 3D audio, virtual reality experiences can be enhanced with body tracking, hand tracking (and gesture recognition), locomotion tracking (for example, VR treadmills), and controllers with haptic feedback. The goal of all of this is to increase your sense of immersion and presence in the virtual world.