Creating a DataFrame

With a SparkSession object, applications can create DataFrames from an existing RDD, a Hive table, or any of the data sources mentioned in Chapter 3, ELT with Spark. The previous chapter showed how to create DataFrames, in particular from text files and JSON documents. Here we use a Call Detail Records (CDR) dataset for some basic data manipulation with DataFrames. The dataset is available from this book's website if you want to practice with the same data.

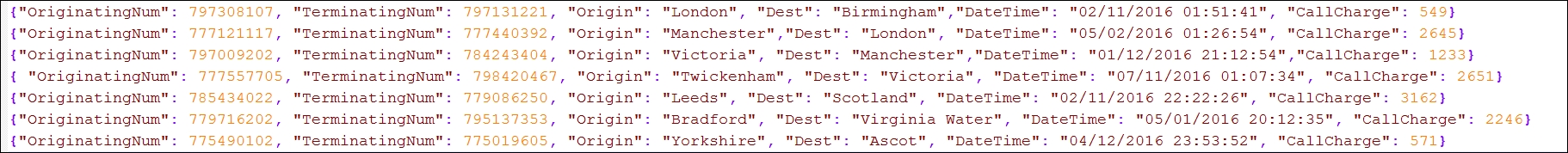

A sample of the data set looks like the following screenshot:

Figure 4.8: Sample CDRs data set

Manipulating a DataFrame

We are going to perform the following actions on this dataset:

- Load the dataset as a DataFrame.

- Print the top 20 records from the DataFrame.

- Display the schema.

- Count the total number of calls originating from London.

- Compute the total revenue from calls originating from London and terminating in Manchester.

- Register the dataset as a table to be operated on using SQL.