Model overfitting and bias-variance tradeoff

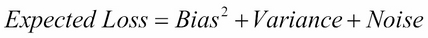

The expected loss mentioned in the previous section can be written as a sum of three terms in the case of linear regression with a squared loss function, as follows:

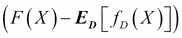

Here, Bias is the difference  between the true model F(X) and average value of

between the true model F(X) and average value of  taken over an ensemble of datasets. Bias is a measure of how much the average prediction over all datasets in the ensemble differs from the true regression function F(X). Variance is given by

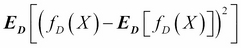

taken over an ensemble of datasets. Bias is a measure of how much the average prediction over all datasets in the ensemble differs from the true regression function F(X). Variance is given by  . It is a measure of extent to which the solution for a given dataset varies around the mean over all datasets. Hence, Variance is a measure of how much the function

. It is a measure of extent to which the solution for a given dataset varies around the mean over all datasets. Hence, Variance is a measure of how much the function  is sensitive to the particular choice of dataset D. The third term Noise, as mentioned earlier, is the expectation of difference

is sensitive to the particular choice of dataset D. The third term Noise, as mentioned earlier, is the expectation of difference  between observation and the true regression function, over all the values of X and Y. Putting all these together, we can write the following:

between observation and the true regression function, over all the values of X and Y. Putting all these together, we can write the following:
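The three terms can be estimated numerically by simulating an ensemble of datasets. The following is a minimal sketch, not a definitive implementation. It rests on several assumptions that are not part of the text: the true model F(X) is taken to be a sine curve, the observations carry Gaussian noise with a known standard deviation, and the fitted model is a polynomial (linear in its parameters) fitted by least squares. The names `true_function` and `bias_variance_noise` are hypothetical and used only for illustration.

```python
import numpy as np


def true_function(x):
    # Assumed stand-in for the true model F(X); chosen only for illustration.
    return np.sin(2 * np.pi * x)


def bias_variance_noise(degree, n_datasets=200, n_points=30,
                        noise_std=0.3, seed=0):
    """Estimate squared bias, variance and noise over an ensemble of datasets."""
    rng = np.random.default_rng(seed)
    x_test = np.linspace(0.0, 1.0, 50)
    predictions = np.empty((n_datasets, x_test.size))

    for d in range(n_datasets):
        # Draw one dataset D from the assumed data-generating process.
        x = rng.uniform(0.0, 1.0, n_points)
        y = true_function(x) + rng.normal(0.0, noise_std, n_points)
        # Least-squares polynomial fit (a linear regression in the coefficients).
        coeffs = np.polyfit(x, y, degree)
        predictions[d] = np.polyval(coeffs, x_test)

    # Average prediction over all datasets in the ensemble.
    mean_prediction = predictions.mean(axis=0)
    # Squared bias: squared gap between the average prediction and F(X).
    bias_sq = np.mean((mean_prediction - true_function(x_test)) ** 2)
    # Variance: spread of the per-dataset predictions around their mean.
    variance = np.mean(predictions.var(axis=0))
    # Noise: variance of the observation noise, known here by construction.
    noise = noise_std ** 2
    return bias_sq, variance, noise


if __name__ == "__main__":
    for degree in (1, 6):
        b, v, n = bias_variance_noise(degree)
        print(f"degree={degree}: bias^2={b:.4f} variance={v:.4f} noise={n:.4f}")
```

Under these assumptions, a straight-line fit cannot follow the sine curve and so typically shows a much larger squared bias than the degree-6 fit, while more flexible fits tend to vary more from one dataset to the next.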

The objective of machine learning is to learn the function from data that minimizes...