Chapter 3: Introduction to Neural Networks

Activity 4: Sentiment Analysis of Reviews

Solution:

- Open a new Jupyter notebook. Import numpy, pandas and matplotlib.pyplot. Load the dataset into a dataframe.

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

dataset = pd.read_csv('train_comment_small_100.csv', sep=',')

- The next step is to clean and prepare the data. Import re and nltk. From nltk.corpus, import stopwords. From nltk.stem.porter, import PorterStemmer. Create an empty list to store your cleaned text in.

import re

import nltk

nltk.download('stopwords')

from nltk.corpus import stopwords

from nltk.stem.porter import PorterStemmer

corpus = []

- Using a for loop, iterate through every instance (every review). Replace all non-alphabetic characters with a ' ' (whitespace). Convert all letters to lowercase. Split each review into individual words. Initialize the PorterStemmer. If a word is not a stopword, perform stemming on it. Join the individual words back together to form a cleaned review. Append this cleaned review to the list you created.

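# Build the stopword set once instead of rebuilding it for every word

stop_words = set(stopwords.words('english'))

# Note: the -1 leaves out the last row, so corpus ends up one review shorter than the dataset

for i in range(0, dataset.shape[0]-1):

    review = re.sub('[^a-zA-Z]', ' ', dataset['comment_text'][i])

    review = review.lower()

    review = review.split()

    ps = PorterStemmer()

    review = [ps.stem(word) for word in review if word not in stop_words]

    review = ' '.join(review)

    corpus.append(review)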
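To see what the cleaning loop does to a single review, here is a minimal sketch that needs only the standard library. The `clean_review` helper and the tiny `STOP_WORDS` set are hypothetical stand-ins for illustration: the solution uses NLTK's full English stopword list, and stemming is left out here so the example runs without NLTK.

```python
import re

# Hypothetical, deliberately tiny stopword set (assumption for illustration);
# the solution uses NLTK's full English list instead.
STOP_WORDS = {"the", "is", "a", "this", "and", "it"}

def clean_review(text, stop_words=STOP_WORDS):
    """Keep letters only, lowercase, split into words, and drop stopwords."""
    letters_only = re.sub('[^a-zA-Z]', ' ', text)   # digits and punctuation become spaces
    words = letters_only.lower().split()            # lowercase, then split on whitespace
    kept = [word for word in words if word not in stop_words]
    return ' '.join(kept)                           # stemming is intentionally omitted

cleaned = clean_review("This movie is GREAT!!! 10/10, loved it.")
print(cleaned)
```

In the actual solution, each surviving word would additionally be passed through `ps.stem` before the words are joined.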

- Import CountVectorizer. Convert the reviews into word count vectors using CountVectorizer.

from sklearn.feature_extraction.text import CountVectorizer

cv = CountVectorizer(max_features = 20)

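- Fit the vectorizer on the corpus and convert the result into an array in which each retained word is its own column, so that the word counts act as the independent variables. Take the target from the first column of the dataset and keep the first 99 labels to match the number of cleaned reviews.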

X = cv.fit_transform(corpus).toarray()

y = dataset.iloc[:,0]

y1 = y[:99]

y1
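The idea behind word count vectors can be sketched by hand. The `count_vectors` function below is a simplified, hypothetical stand-in for CountVectorizer with max_features: it keeps the most frequent words across the corpus and counts them per review. It does not reproduce the real tokenizer or its tie-breaking rules, and the toy corpus is made up.

```python
from collections import Counter

def count_vectors(corpus, max_features):
    """Build word-count rows over the max_features most frequent words."""
    totals = Counter(word for doc in corpus for word in doc.split())
    # Keep the most frequent words, then order the columns alphabetically.
    vocabulary = sorted(word for word, _ in totals.most_common(max_features))
    rows = [[doc.split().count(word) for word in vocabulary] for doc in corpus]
    return vocabulary, rows

# Made-up, already-cleaned reviews (assumption for illustration)
toy_corpus = ["good movi good plot", "bad movi", "good movi act"]
vocabulary, X_toy = count_vectors(toy_corpus, max_features=2)
print(vocabulary)
print(X_toy)
```

Each row of `X_toy` corresponds to one review and each column to one retained word, which is the same layout as the X array built above.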

- Import preprocessing from sklearn. Use its LabelEncoder on the target output (y1).

from sklearn import preprocessing

labelencoder_y = preprocessing.LabelEncoder()

y = labelencoder_y.fit_transform(y1)
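Label encoding maps each distinct class to an integer. A minimal sketch, assuming the classes are numbered in sorted order (which is how LabelEncoder orders them); the `encode_labels` helper and the example labels are hypothetical:

```python
def encode_labels(labels):
    """Map each distinct label to an integer, numbering classes in sorted order."""
    classes = sorted(set(labels))
    index_of = {label: i for i, label in enumerate(classes)}
    return classes, [index_of[label] for label in labels]

# Made-up labels (assumption for illustration)
classes, encoded = encode_labels(["positive", "negative", "negative", "positive"])
print(classes)
print(encoded)
```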

- Import train_test_split. Divide the dataset into a training set and a validation set.

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.20, random_state = 0)
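It can help to know how many rows end up on each side of the split. The sketch below assumes 99 samples (the number of labels kept earlier) and assumes that a fractional test_size is rounded up to a whole number of rows; `split_sizes` is a hypothetical helper, not part of scikit-learn.

```python
import math

def split_sizes(n_samples, test_size):
    """Return (n_train, n_test), rounding the test share up to a whole row."""
    n_test = math.ceil(test_size * n_samples)
    return n_samples - n_test, n_test

# 99 samples is an assumption taken from the labels kept earlier
n_train, n_test = split_sizes(99, 0.20)
print(n_train, n_test)
```

You can confirm the real sizes in the notebook by checking X_train.shape and X_test.shape.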

- Import StandardScaler from sklearn.preprocessing. Fit the StandardScaler on the features of the training set, then apply the same scaling to the features of the validation set (X).

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)
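The reason for calling fit_transform on the training set but only transform on the validation set is that the mean and standard deviation should come from the training data alone. A minimal single-column sketch with made-up numbers; `fit_scaler` and `transform` are hypothetical helpers that mimic the idea rather than the library's implementation:

```python
from statistics import mean, pstdev

def fit_scaler(column):
    """Learn the mean and population standard deviation from training values."""
    sigma = pstdev(column)
    # Guard against constant columns so we never divide by zero
    return mean(column), sigma if sigma > 0 else 1.0

def transform(column, mu, sigma):
    """Scale values using statistics learned elsewhere."""
    return [(value - mu) / sigma for value in column]

# Made-up word counts for one feature (assumption for illustration)
train_counts = [0, 2, 4]
test_counts = [2, 6]

mu, sigma = fit_scaler(train_counts)                 # statistics come from training data only
train_scaled = transform(train_counts, mu, sigma)
test_scaled = transform(test_counts, mu, sigma)      # reuse the training statistics
print(train_scaled)
print(test_scaled)
```

The scaled training values are centered on zero, while the validation values are simply expressed on the same scale and need not be.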

- The next task is to create the neural network. Import tensorflow and keras. Import Sequential from keras.models and Dense from keras.layers.

import tensorflow

import keras

from keras.models import Sequential

from keras.layers import Dense

- Initialize the neural network. Add the first hidden layer with 'relu' as the activation function. Repeat this step for the second hidden layer. Add the output layer with 'sigmoid' as the activation function, since 'softmax' on a single output unit would always output 1. Compile the neural network, using 'adam' as the optimizer, 'binary_crossentropy' as the loss function and 'accuracy' as the performance metric.

classifier = Sequential()

# units and kernel_initializer replace the older output_dim and init argument names

classifier.add(Dense(units = 20, kernel_initializer = 'random_uniform', activation = 'relu', input_dim = 20))

classifier.add(Dense(units = 20, kernel_initializer = 'random_uniform', activation = 'relu'))

classifier.add(Dense(units = 1, kernel_initializer = 'random_uniform', activation = 'sigmoid'))

classifier.compile(optimizer = 'adam', loss = 'binary_crossentropy', metrics = ['accuracy'])
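For an output layer with a single unit, the choice of activation matters. Softmax normalizes across the units of a layer, so with only one unit it returns 1 whatever the input is, whereas sigmoid maps the same input to a value between 0 and 1. A small sketch in plain Python, with made-up input values:

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    shifted = [z - max(logits) for z in logits]
    exps = [math.exp(z) for z in shifted]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(z):
    """Logistic function mapping a real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

# Made-up pre-activation values for a one-unit output layer
single_logits = [-3.0, 0.0, 4.0]
softmax_outputs = [softmax([z])[0] for z in single_logits]   # one unit per softmax call
sigmoid_outputs = [sigmoid(z) for z in single_logits]
print(softmax_outputs)
print(sigmoid_outputs)
```

With 'binary_crossentropy' as the loss, a one-unit sigmoid output is the usual pairing; softmax becomes useful once there is one output unit per class.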

- Now we need to train the model. Fit the neural network on the training dataset with a batch_size of 3 for 5 epochs.

# epochs replaces the older nb_epoch argument name

classifier.fit(X_train, y_train, batch_size = 3, epochs = 5)

X_test

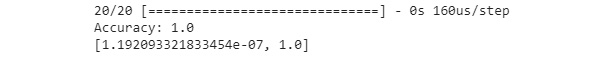
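The batch size and the number of passes over the data together determine how many weight updates the network receives. A rough calculation, assuming 79 training rows (an assumption based on an 80/20 split of 99 samples) and assuming the last, smaller batch of each pass still counts as one update; `updates_for_training` is a hypothetical helper:

```python
import math

def updates_for_training(n_train, batch_size, epochs):
    """Return (updates per pass, total updates) for mini-batch training."""
    steps_per_epoch = math.ceil(n_train / batch_size)   # a partial final batch still counts
    return steps_per_epoch, steps_per_epoch * epochs

# n_train=79 is an assumption; check X_train.shape[0] in your own notebook
steps_per_epoch, total_updates = updates_for_training(n_train=79, batch_size=3, epochs=5)
print(steps_per_epoch, total_updates)
```

The steps-per-pass figure is the count you would expect to see in the progress output of each pass.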

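- Validate the model. Evaluate the neural network against the true labels of the validation set and print the accuracy score to see how it's doing.

y_pred = classifier.predict(X_test)

# Compare against the true labels (y_test), not against the model's own predictions

scores = classifier.evaluate(X_test, y_test, verbose=1)

print("Accuracy:", scores[1])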
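Accuracy only carries information when the predictions are compared with the true labels; scoring a set of predictions against itself always gives 1.0. The sketch below computes binary accuracy by hand with a 0.5 threshold. The `binary_accuracy` helper and the numbers are made up for illustration.

```python
def binary_accuracy(y_true, probabilities, threshold=0.5):
    """Fraction of thresholded predictions that match the true labels."""
    predictions = [1 if p > threshold else 0 for p in probabilities]
    correct = sum(int(pred == true) for pred, true in zip(predictions, y_true))
    return correct / len(y_true)

# Made-up labels and predicted probabilities (assumption for illustration)
toy_true = [1, 0, 1, 1]
toy_prob = [0.9, 0.2, 0.4, 0.7]

toy_accuracy = binary_accuracy(toy_true, toy_prob)          # against the true labels
self_accuracy = binary_accuracy([1, 0, 0, 1], toy_prob)     # against the model's own thresholded output
print(toy_accuracy, self_accuracy)
```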

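- (Optional) Print the confusion matrix by importing confusion_matrix from sklearn.metrics. Convert the predicted probabilities into class labels with a 0.5 threshold first.

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, (y_pred > 0.5).astype(int).ravel())

print(cm)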

scores

Your output should look similar to this: